Ethics right down to the engine room

This is how we address power dynamics, bias, and accountability in our use of AI, data, and digital tools.

When we develop digital tools for citizen engagement, analyze qualitative data using AI, or visualize thousands of voices on a single map, we make choices. Choices about who can participate, what counts as knowledge, which patterns become visible, and which ones disappear. None of these are neutral or purely technical choices. They are ethical and political, and they have direct consequences for which voices are heard and which decisions are made based on our work. Ethics is therefore not an add-on to what we do. It is a prerequisite that is baked into the very engine room from the start.

Our approach to ethics and technology

Responsible use of AI doesn’t come from a checklist. It comes from a culture where the tough questions are asked again and again—about bias, about power, about who is heard and who is overlooked. For us, ethics isn’t a layer you slap on top of a finished product. It’s an ongoing practice, a capacity for critical reflection that must be trained and actively used in everyday life to stay sharp. Here’s what defines our approach.

-

We don’t start a project and add ethical considerations afterward. From the very first design decision, we consider what our tools can and cannot be used for. For example, faces are automatically blurred in Involve, and users have full control over their data. When we use the Model for wicked for analysis, commercial entities cannot use the material we collect and process to train their models.

-

Our tools were not developed in isolation. The Involve app is built on an open-source code base from the Urban Belonging app, which was developed in collaboration with researchers and seven minority groups in Denmark and won the EU Citizen Science Award 2023. The app has also been tested in practice by a broad user group, through an open invitation to everyone who subscribes to INVI’s newsletter. The model for wicked was developed with an independent advisory group of experts from research, civil society, and the business community. Those who will use our tools, and who can challenge them, have been involved from the very beginning.

-

Our team is multidisciplinary and diverse, and the tools we build reflect the people who create them. In the research we conduct, we make a point of including the full range of affected voices. The most critical insights into a problem often come from those who live with it, not from those who manage it.

-

We regularly test our methods with external researchers and publish the results. This isn’t quality assurance based on a checklist. It’s quality assurance as an ongoing practice that ensures we are constantly challenged and informed by the work of the most talented researchers in the field of AI.

-

We are transparent about the technological dependencies our work relies on, including which models we use and what we cannot see into. Large language models in particular often come with a lack of transparency and explainability. Our response is not to turn a blind eye, but to help give the field better tools for evaluating and comparing models.

We ground our ethics work in research

Engaging with ethical dilemmas becomes more effective when it is subjected to critical scrutiny from outside. We therefore seek out research communities both in Denmark and abroad that are at the forefront of work on technology, power, and democracy, and apply those insights to our practice.

See examples of projects and research visits below.

If you have an idea for a workshop, a research project, or a discussion that could help us better understand complex issues surrounding AI and ethics, please write to us! We’d love to hear what your idea is and see if we can come up with something that will help us all learn more together.

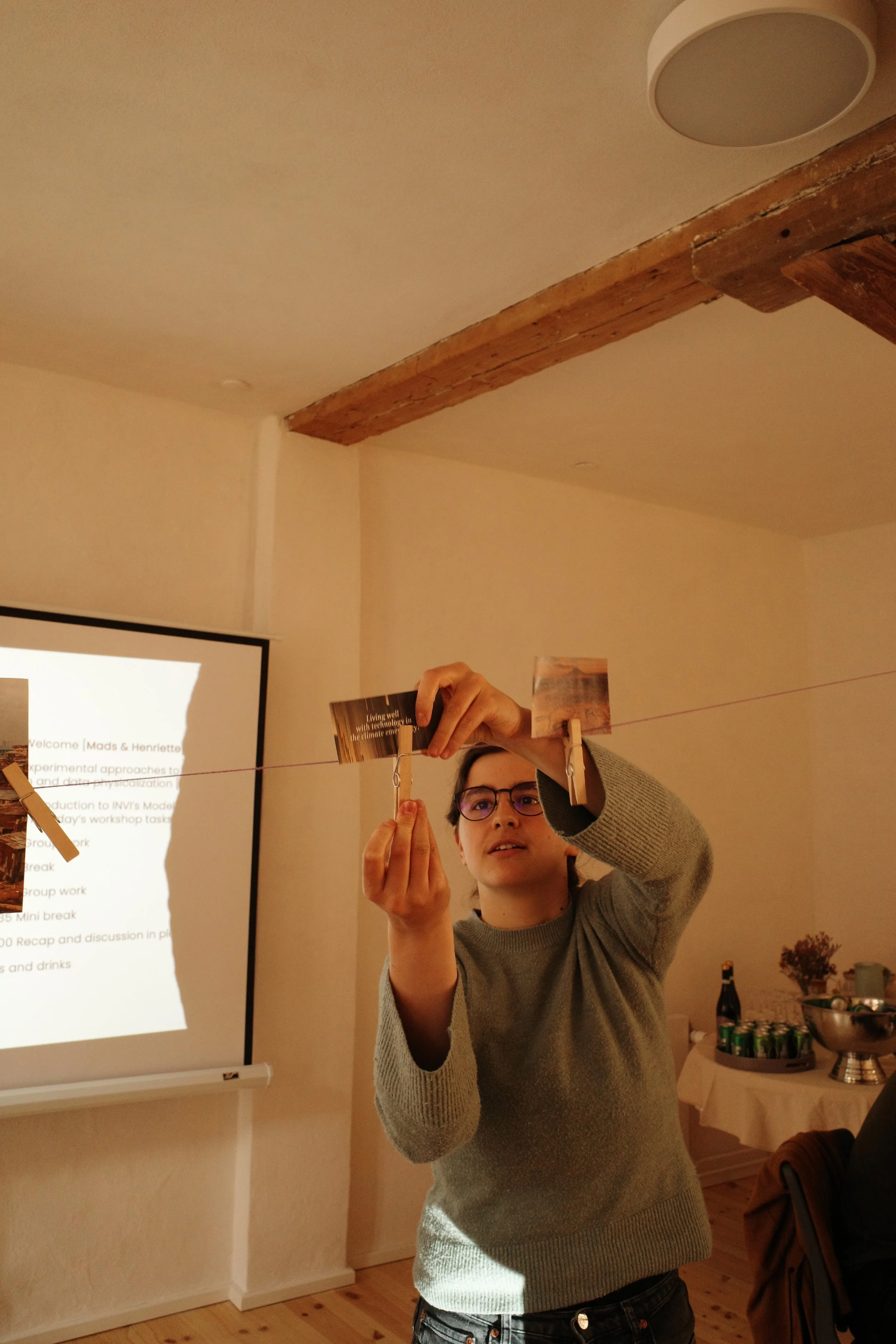

Snapshots

Photos from the “Doing AI Otherwise” workshop series with ITU’s ETHOS Lab.

Where do we find inspiration?

Our approach to data ethics was not developed in a vacuum. We draw on a range of critical and feminist research traditions that have challenged the notion of technology as neutral and objective.

-

Building on the work of Catherine D'Ignazio and Lauren Klein, we ask critical questions about power dynamics and discrimination in data-driven projects. Who is represented in our data—and who is excluded? Who benefits from the insights we produce? How can we use data positively to challenge, rather than reinforce, established categorizations and entrenched binary classifications? These are questions that shape our design from the outset.

See *Data Feminism* (2020) here, and find inspiration in the work of the Data + Feminism Lab at MIT here.

-

Inspired by Sasha Costanza-Chock’s framework, we are working to ensure that the people who most often bear the consequences of technological decisions also have a real say in how our analytical tools and data projects are designed and used to inform decision-making.

Before we launch an analysis project on Ungeløftet, for example, we involve the young people themselves in evaluating the questions we plan to ask. And when we develop digital tools such as the Involve app, we test accessibility and usability with different user groups and incorporate their needs and feedback into the tool’s development.

Find *Design Justice* (2020) as an open-access resource at MIT Press here.

-

We recognize that data are not raw facts, but are always already interpreted constructs shaped by the systems, categories, and power relations within which they arise. Within Science and Technology Studies (STS) and critical data studies, it is well documented how technological systems embed and reinforce existing social hierarchies—often invisibly and seemingly objectively. This makes us more cautious about what we conclude and more explicit about our methodological choices.

We draw particularly on: Kate Crawford’s *Atlas of AI* (2021), which maps the political, environmental, and social costs of AI infrastructure; *Raw Data is an Oxymoron* (ed. Gitelman, 2013), which demonstrates how data are always constructed rather than given; Meredith Broussard’s *Artificial Unintelligence* (2018) on the systematic blind spots in technological problem-solving; as well as classic STS contributions from Sheila Jasanoff on sociotechnical imaginaries and Langdon Winner’s question of whether artifacts have politics.

-

Data visualizations are never neutral representations of reality. They are always the result of choices: about what is included and excluded, how phenomena are defined and named, and which perspectives are prioritized. Within critical cartography and cultural geography, it is well documented that even seemingly objective maps and visualizations are political documents that shape what we perceive as natural, true, and possible. This applies no less to the large, plotted maps we produce when visualizing thousands of citizens’ and practitioners’ statements about social problems. Which voices end up at the center of the map, and which are placed on the periphery? Which statements do we cluster together, and what do we lose when we do so?

We draw in particular on Martin Dodge, Rob Kitchin, and Chris Perkins’ *Rethinking Maps* (2009), which demonstrates how maps never merely depict the world, but actively construct it through the choices made in the process; Denis Wood’s *The Power of Maps* (1992), which examines how the map’s apparent objectivity conceals the interests and perspectives that have shaped it; as well as J.B. Harley’s “Deconstructing the Map” (1989), which, drawing inspiration from Foucault and Derrida, opens the door to a critical reading of what a map includes, excludes, and normalizes. Harvey’s broader work on how space and representation are inextricably linked to power further informs our awareness that it is never a matter of chance which understandings of a problem find their way into our visualizations.

This compels us to continually ask: What do our visualizations obscure? What categories and boundaries have we chosen, and what would we have seen if we had chosen differently?

We share our knowledge in a masterclass

We believe that ethical work with AI should not be reserved for researchers or for organizations with ample resources to give it thorough consideration. That is why we offer AI Ethics in Practice, a course developed and taught by INVI’s Chief Analyst, Sofie Burgos-Thorsen, in collaboration with researcher Tess Skadegaard Thorsen. It gives leaders and others responsible for the use of AI in their organizations concrete skills and tools to identify and address ethical dilemmas in the use of AI.

The development of the course is supported by the Danish Data Science Academy, and the course will be offered for the first time in the spring of 2026.